As I write this, 2018 is almost over. It has been an interesting year for me, both personally and in career terms. Time to stop and think about the technologies that I have used this year. I’ve structured this review into sections that group similar technologies together.

Ruby

For most of my development time I am still using Ruby on Rails. It feels extremely productive and mature. In comparison to more “modern” ecosystems, there are rarely major breaking changes between version upgrades, yet there are plenty of choices for production-ready community code. Thus, most of our apps are already on the newest Rails 5.2, running Ruby 2.5. Also, having a monoculture of one framework is productive for the team, as any member can get up to speed with any project, technology-wise, in a short time.

Still, even in a stable ecosystem, there are new framework features that we introduced this year. We had the chance to experiment with two new Rails core frameworks that are either already integrated into Rails or coming soon (Rails 6): ActiveStorage and Webpacker. Besides that, we streamlined our test suite and changed most acceptance tests to run in headless Firefox via Selenium, after PhantomJS was deprecated. We had also been using headless Chrome, but after regularly seeing random test failures, especially after Chrome(-driver) version changes, we switched to Firefox and never looked back.

ActiveStorage

ActiveStorage, a newish framework for handling uploads, was introduced into Rails core with Rails 5.2. As it is still young, there is a lot of movement in the code base and some parts are still missing, like validations or a different storage configuration per model. Still, where the requirements matched, it has been a pleasure to use. Knowing that it is integrated into core makes me eager to use it more in the future. The community can now standardize on fewer ways of handling file uploads and deliver more value by joining forces. ActiveStorage seems to be a good start, as switching between different storage adapters is very easy to manage out of the box.

Webpacker

The other big integration into a majority of our projects has been the “webpacker” gem. We’ve been using it almost since version 1 was released in March 2017. Thus, we had some pain upgrading (or are still waiting to upgrade to WP 5). There is definitely a learning curve for Webpack, but in the end it is so much more powerful than the vanilla asset pipeline. The webpacker gem itself is very nice to use and we had few problems with the Rails integration. But be sure to check out the source code, especially that of the accompanying npm package, which does most of the work.

I cannot imagine going back to a manual, non-modular approach when developing more and more frontend applications or components. I still think that Rails on the backend, generating many pages on the server side, is the right approach, but there is definitely demand for more interactive web applications. Most apps can benefit from sprinkled-in components that improve sections of important screens.

JavaScript

This year I wrote more JavaScript than in any year before. Mostly, it has been Vue.js in the shape of single file components with babel.js / ECMAScript 2017+. After starting with AngularJS 4 years ago and trying Angular and React (Native) in the meantime, I arrived at Vue.js, which I have been in love with since version 1.0. In my opinion it combines the best things from the old AngularJS - approachable template syntax, separation of template + “controller”, “just works” data binding - and React - component focused development with a central store pattern. All that, without forcing you to adopt a vocabulary that might be alien to you, especially for newer developers (Angular: Provider, “Services”, DI; React: Redux stuff, like Reducer, Thunk, Action Creator, Saga, etc.). Vue allows a gradual adjustment of the framework to your app; you can use it almost AngularJS style, letting it parse and take over your whole site, or you can use functional components, never see any HTML and define all elements with createElement.

TypeScript is the part I am still missing. As Vue 3 will be written in TypeScript, that will surely be my main learning area in 2019, frontend-wise.

DevOps

Terraform

One of the discoveries in 2018 has been Terraform. I’ve mainly used it to reproducibly set up S3 buckets with the right permissions, encryption etc. I also played around with Route53 domain names, SES, Hetzner Cloud and an Openstack (OVH) provider. Having a static validation of one’s configuration before a run is reassuring. In comparison to Ansible, which could be used for similar tasks, it is much faster in execution and also safer. It can detect changes/deviations of the infrastructure from the plan and only apply the necessary changes, even in parallel when possible. Also, having another part of the server side “in code” and under version control fills the gap that remains when one sets up DNS, orders new servers, links VPCs etc.

Ansible

For all of our deployments, Ansible is still king. While using it over the last couple of years, we developed a role repository that allows us to set up a new root server with containers, Rails, SSL-terminating load balancer, bastion etc. in a matter of hours with very little code (just wait for the execution), as well as deploy completely new apps to a container deployment (LXC) in less than 1h. Ansible has also reached a stable level with 2.x. Our Rails provisioning playbook allows for attaching a production console for admins, ad-hoc deployment of individual branches, inline Ruby version upgrades, Sidekiq systemd jobs, Mailroom IMAP mail receiving, Webpack/NodeJS and a central virus scanner (Clamd). We open sourced several of those roles in the pludoni Github group, and are using and contributing to the Dresden Weekly Ansible repository.

Docker & Cloud

At the moment, we are using Docker only for development and CI purposes (and for running the Ansible deployment). Migrating a grown Rails app to Docker is not always trivial, as stateful things like uploads, the database itself and database migrations must be integrated and well tested. This might be worth an excursion for one or two of our simpler apps. None of our apps has the load to justify running an orchestrated cloud container setup, like Kubernetes or GKE, from the beginning. Root servers are extremely powerful and cost efficient right now, as a typical Hetzner style server with 32GB RAM, Core i7 and SSD will run circles around most cloud instances or services for a fraction of the cost. We would rather save that money on our infrastructure bill, as a similar setup on AWS would cost a big multiple of our current spending, even without failover/HA included.

Caddy

Another nice discovery was using Caddy as an intermediate web server. The “Auto”-Letsencrypt feature and an extremely readable config format lured us in, and we tried it as an Nginx replacement for development and later on one production server. Having less configuration has the advantage that one does not have to worry about details like correct TLS settings, Letsencrypt certificate renewal, correct proxy settings for e.g. Websockets etc. There might be some things that are harder to achieve, like configurable HTTP basic auth. But overall the experience has been very nice.

Database layer

Not much new in this section. We are still running PostgreSQL as the primary database storage for most of our existing apps and all new apps. Having USP features like a jsonb datatype, CTEs, schema migrations that are also wrapped in transactions, datatypes like UUIDs and IP addresses, as well as good performance and always consistent storage is a win. Most apps also connect to a Redis instance, mainly for Sidekiq or as a caching backend, rarely also as a backend for message_bus (an alternative to ActionCable). Our various job and content searches are backed by Elasticsearch.

As a data pattern, we are more and more convinced of having a system that primarily stores events (think: DDD, CQRS, Event-Store), where all events are first persisted with the raw input data and then asynchronously processed with separate error handling. Thus, we can understand how specific objects arrived at their current state and can trace undefined conditions that were not spec’d at the beginning. That came in especially handy when we planned and implemented our ATS (applicant tracking system), where more and more state transitions appeared that we had not thought of before.

Another side note goes to Firebase’s Firestore, which we are using for storing user preferences for our HRfilter app, although we haven’t used a lot of its async features. It just so happens that the MBaaS (mobile backend as a service) integration into Flutter was extremely easy and straightforward to use.
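
To make the idea a bit more tangible, here is a minimal in-memory sketch in plain Ruby. It is not our production code: the `EventStore` class, the `Event` struct and the event type names are made up for illustration, and a real system would persist to a database and process in a background job (e.g. Sidekiq) instead of an array and a loop.

```ruby
require "json"

# Hypothetical event record: the raw input is kept untouched as a JSON string.
Event = Struct.new(:id, :type, :payload, :recorded_at, :status, :error)

class EventStore
  attr_reader :events

  def initialize
    @events = []
    @handlers = Hash.new { |hash, type| hash[type] = [] }
  end

  # Register a handler block for an event type.
  def on(type, &handler)
    @handlers[type] << handler
  end

  # Step 1: persist the raw input first, without interpreting it.
  def record(type, payload)
    event = Event.new(@events.size + 1, type, JSON.generate(payload),
                      Time.now.utc, :pending, nil)
    @events << event
    event
  end

  # Step 2: process pending events later. A failing handler only marks
  # that one event as failed; the raw data stays available for a retry.
  def process_pending
    @events.select { |event| event.status == :pending }.each do |event|
      begin
        data = JSON.parse(event.payload)
        @handlers[event.type].each { |handler| handler.call(data) }
        event.status = :processed
      rescue StandardError => e
        event.status = :failed
        event.error = e.message
      end
    end
  end
end
```

Because the raw payload is stored before any handler runs, an unexpected state transition ends up as a `:failed` event that can be inspected and replayed once the handler has been fixed, instead of being lost.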

Cloud

As written before in the Terraform section, we dipped our toes into AWS this year. I’ve already been using AWS privately, with S3 and image recognition. As we developed the ATS, we were on the lookout for a scalable storage solution that can be used by ActiveStorage. After discarding other storage providers, we landed on S3, which provides all the features we want (encryption, CORS, storage in Germany). As the industry leader, AWS also enjoys the best 3rd party support, so the ActiveStorage integration has been straightforward as well. That is in contrast to e.g. an Openstack solution we tried, where we did not find any examples and ran into problems that we seemed to be the first ones to have.

In the future we would like to move part of our domain hosting to AWS Route53 or OVH DNS, too, so we can take advantage of a configurable API that allows us to deploy new websites with a standard set of records. Running part of our outgoing email infrastructure over SES is also interesting.

App development

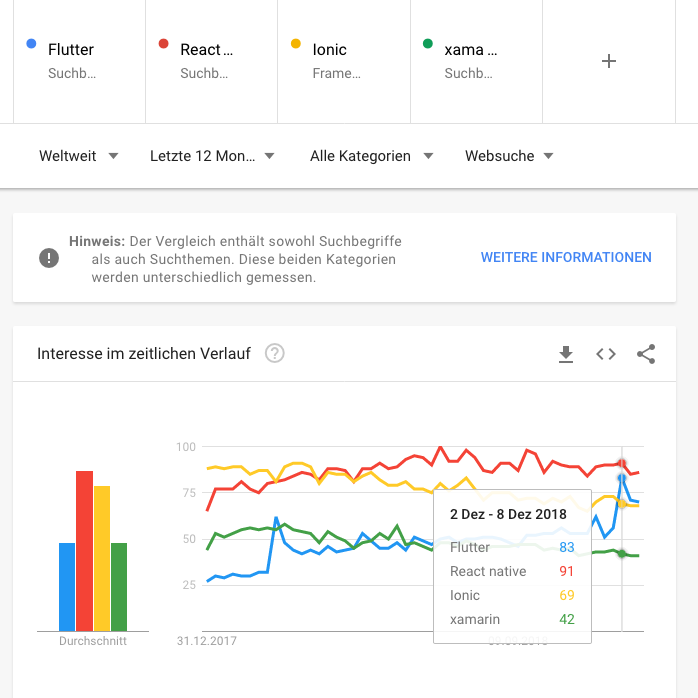

2018 has also been the year of the rise of Flutter app development. The final 1.0 version was released on 4th December. I started using Flutter this summer and was immediately blown away by its features and quick development workflow. Having used a little React Native in 2016/2017 and Ionic before that, I find that Flutter offers a much more stable experience.

In comparison to React Native, Flutter comes with “batteries included” and offers 1st party support for a multitude of widgets and integrations. I had nightmares choosing a React Native navigation bar solution at the beginning of 2017: so many different solutions that all “kind of worked” and each had its own drawbacks.

I was especially surprised by how easy it is to get started when using Visual Studio Code (vscode) in combination with live reloading in an emulator or on a real phone, much like with React Native before. Dart, as a typed, compiled language, offers very good autocompletion and documentation support. Dart itself is relatively easy to learn if one has ever used a statically typed language before. In comparison to React and the JS ecosystem it feels more cohesive, as there are not 3 different plugins for each problem of which 2 are abandoned or do not work.

At the moment, there are two apps by me in the Play store and 1 in the Apple App Store. Both are obviously based upon the same code base:

- HRfilter - news aggregator about HR/personnel/recruiting news sources (Play store, App Store)
- Fahrrad-Filter - news aggregator about bike related topics (Play store)

Both are frontends for the respective aggregator backend.

Editor / Tooling

Still using console Vim in the form of Neovim. With plugins like ALE or coc there is also some decent IDE support that helps in day-to-day work. I am working mostly on a remote development server with an ever running tmux session and nvim inside.

As mentioned in the Flutter section, I’ve also come to know VS Code, which I prefer for Dart, as the autocompletion and Flutter integration are superb.

This year I started to replace RVM with asdf, which offers a unified experience to manage both Ruby and NodeJS versions (as well as a lot of other languages) on a per-project basis.

Project management / Services

Gitlab

After introducing Gitlab to the whole (non-dev) team at the end of 2017, we are very happy with the self hosted version of Gitlab. The “omnibus” installation worked flawlessly across all upgrades, and the team has now for the most part got used to interacting with issues instead of sending 1-1 messages for bugs, feature requests and discussions. To help with the adoption on the developer side, we are using the time tracking feature extensively. I’ve built a custom CLI tool which interacts with Gitlab and can be used to start/stop time tracking, check out issue branches, create issues on the fly etc.

Having most of the feature communication and documentation on Gitlab helps when running a remote team, as issue progress is transparent and reporting / tracking is simplified. For our team’s development goals, I’ve built tools that connect to the Gitlab API to generate a development roadmap for each quarter, export the time tracking data into another system, or analyze developer activity.
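
As a rough idea of what the “stop time tracking” part of such a CLI boils down to, here is a small sketch using only Ruby’s standard library. It is not the actual tool: the helper names, the host `gitlab.example.com` and the token are placeholders. It builds a request against Gitlab’s “add spent time” issue endpoint (API v4) and leaves the sending to the caller.

```ruby
require "net/http"
require "uri"

# Hypothetical helper: turn elapsed seconds into Gitlab's human readable
# duration format, e.g. 5400 seconds become "1h30m".
def format_duration(seconds)
  minutes = (seconds / 60.0).round
  hours, mins = minutes.divmod(60)
  parts = []
  parts << "#{hours}h" if hours > 0
  parts << "#{mins}m" if mins > 0 || parts.empty?
  parts.join
end

# Hypothetical helper: build (but do not send) the POST request that books
# the tracked time onto an issue.
def build_spent_time_request(base_url:, token:, project_id:, issue_iid:, seconds:)
  uri = URI.join(base_url,
                 "/api/v4/projects/#{project_id}/issues/#{issue_iid}/add_spent_time")
  request = Net::HTTP::Post.new(uri)
  request["PRIVATE-TOKEN"] = token
  request.set_form_data("duration" => format_duration(seconds))
  request
end

# Sending would look roughly like this (placeholder host and token):
#   request = build_spent_time_request(base_url: "https://gitlab.example.com",
#     token: "secret", project_id: 42, issue_iid: 7, seconds: 5400)
#   Net::HTTP.start("gitlab.example.com", 443, use_ssl: true) { |http| http.request(request) }
```

Keeping the request building separate from the network call makes the CLI easy to test without a running Gitlab instance.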

Mattermost

Also shipped with the Gitlab omnibus installation is the chat tool Mattermost. Previously, we had been using HipChat, before it was sold to Atlassian. After Mattermost got integrated into Gitlab, we decided to try it out and never looked back. We are running some integrations, like pushing our application errors (via Errbit) and monitoring alerts into relevant channels. The one thing that is missing from Mattermost is a video chat solution. There was a beta feature where you could start 1-1 WebRTC calls, if you managed to set up a WebRTC service for getting around the NAT problem of peer-to-peer communication. Unfortunately, it never worked very reliably and was removed in a newer version. Mattermost now suggests using a third party integration like Zoom.

For ad-hoc video chat, we are using Appear.in in the development team and, rarely, Skype. As we are mostly using the same development server, we can just use tmux for working together and sifting through the code, or when mentoring. The only problem that might occur is when the other peer’s Vim or tmux config differs too much from one’s own :’D

GDPR / DSGVO

A big issue for the whole industry has definitely been the introduction of the GDPR in the European Union at the end of May. It took some resources from our team to build relevant features, like checking all required information, having PII deletion workflows, building a GDPR generator for our various services, or a data-processing agreement generator for our customers.

But it also helped to start a discussion about tracking in general and what kind of metrics we need as a company anyway. Even before, there were only 2 long term cookies that we used, one of which is just a “keep me logged in” function. The real big question mark was, and currently still is, Google Analytics. For our customer and sales communication we need some kind of usage metrics. But since the introduction of the GDPR, we have experienced an extreme drop in those numbers (clicks/sessions) which does not correlate with our business metrics (sent out job applications). Thus, we decided to replace Google Analytics with our own click tracking system, where we have full control over every aspect.

We introduced that system recently on all platforms. The tracking itself is based upon the awesome Rubygem Ahoy and is synchronized to a central system that provides data warehousing capabilities. The IP address is anonymized and no cookie is needed (with the risk and drawback of blurring results when people are using the same IP + browser at the same time). We do very rigorous bot detection, and only track if the client is not in a known “data center” according to IPCat, uses JavaScript, and the behaviour is not obviously abusive (SQL injection patterns, unrealistic number of clicks in a time frame etc.). At the moment we are satisfied with the results. I am looking forward to removing GA fully in 2019 after a test phase.
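
The cookie-less part can be illustrated with a few lines of plain Ruby. This is a simplified sketch and neither Ahoy’s implementation nor our production code: the method names, the salt, the click threshold and the single “data center” range (a documentation network standing in for the IPCat list) are all assumptions.

```ruby
require "ipaddr"
require "digest"

# Zero out the host part of the address: the last octet for IPv4 (/24),
# everything after the first 48 bits for IPv6.
def anonymize_ip(ip)
  addr = IPAddr.new(ip)
  addr.mask(addr.ipv4? ? 24 : 48).to_s
end

# Cookie-less visitor token: a hash of the anonymized IP and the user agent.
# Two clients behind the same network with the same browser get the same
# token, which is exactly the blurring drawback mentioned above.
def visitor_token(ip, user_agent, salt: "hypothetical-salt")
  Digest::SHA256.hexdigest([salt, anonymize_ip(ip), user_agent].join("|"))
end

# Placeholder for a real data center list such as IPCat.
DATACENTER_RANGES = ["203.0.113.0/24"].map { |cidr| IPAddr.new(cidr) }

# Only track clients that run JavaScript, click at a plausible rate and do
# not come from a known data center range.
def trackable?(ip:, javascript:, clicks_last_minute:, max_clicks: 30)
  return false unless javascript
  return false if clicks_last_minute > max_clicks
  addr = IPAddr.new(ip)
  DATACENTER_RANGES.none? { |range| range.include?(addr) }
end
```

The order of the checks is a design choice: the cheap flags are evaluated first, and the range lookup only runs for requests that passed them.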

Stuff

A slightly unrelated shout-out for resources that I’ve been recommending to a friend who was developing a personal website, where you want to have some kind of visuals without breaking the bank.

Unsplash: A no-fuss website with a good set of “Hero” images that might be used for blog articles, no fake “free download” button with credit card registration etc. But please don’t over (ab)use it :)

Noun project: Having Icomoon, Materialize Icons and Font-Awesome is already great when needing some application icons. But in some cases, one likes to dive deeper into very specific topics. I did that for my “Fahrrad-Filter” app, where I wanted to include very specific bike related icons for the categories. This website offers a lot of community icons. The pricing is very fair, or one can decide to use the Creative Commons license with attribution.